Caesar is an autonomous AI research agent. Instead of summarizing a flat list of search results, it treats the web as a graph — building a dynamic knowledge graph as it explores, backtracking when it stagnates, and refining its answer through an adversarial Generator–Verifier loop. The result is deeper, more novel synthesis on the open-ended, cross-disciplinary questions retrieval alone cannot answer.

Why Caesar?

Today's deep-research agents — ChatGPT Deep Research, Perplexity, Gemini Deep Research, GPT Researcher — optimize retrieval precision over a flat sequence of documents. They produce competent summaries but fall into local minima, suffer from navigational amnesia, and converge on derivative, consensus-driven outputs.

Caesar is built differently:

| Capability | Caesar | ChatGPT Deep Research | Perplexity | GPT Researcher |
|---|---|---|---|---|
| Builds a knowledge graph as it explores | ✅ | ❌ | ❌ | ❌ |
| Adversarial self-critique on its own draft | ✅ | ❌ | ❌ | ❌ |
| Multiple drafts, then merged into one | ✅ | ❌ | ❌ | 🟡 |
| Backtracks when an exploration path stalls | ✅ | ❌ | ❌ | ❌ |
| Multi-provider (OpenAI / Anthropic / Gemini) | ✅ | ❌ | ❌ | ✅ |
| Reproducible run logs (JSON) | ✅ | ❌ | ❌ | 🟡 |

Benchmark Results

A blinded three-model LLM-as-a-Judge panel (Claude Sonnet 4.5, GPT-5.2, Gemini 3 Pro) scored each answer from 0 to 10 on three creativity dimensions: New, Useful, and Surprising. Total is the sum of the three scores (maximum 30).

| Agent | New | Useful | Surprising | Total |
|---|---|---|---|---|
| Caesar | 8.64 | 8.38 | 8.27 | 25.29 |
| Gemini 3 Deep Research | 7.69 | 7.09 | 7.49 | 22.27 |
| Sonnet 4.5 Deep Research | 6.96 | 7.20 | 6.73 | 20.89 |
| GPT-5.2 Deep Research | 5.02 | 6.02 | 4.36 | 15.40 |

Mann–Whitney U across all settings: p < 0.001. Ablations confirm both graph exploration and the adversarial verifier loop are independently necessary. See the paper for full methodology, exploration-budget ablation, and judge bias analysis.
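As a quick sanity check, the Total column can be recomputed from the per-dimension scores in the benchmark table. The snippet below is only a sketch for readers who want to re-derive the ranking; the variable and function names are ours, and the numbers are copied from the table, not taken from the raw judge outputs.

```python
# Per-dimension scores (New, Useful, Surprising) copied from the benchmark table.
scores = {
    "Caesar": (8.64, 8.38, 8.27),
    "Gemini 3 Deep Research": (7.69, 7.09, 7.49),
    "Sonnet 4.5 Deep Research": (6.96, 7.20, 6.73),
    "GPT-5.2 Deep Research": (5.02, 6.02, 4.36),
}


def total(agent: str) -> float:
    """Sum the three dimension scores, rounded to two decimals like the table."""
    return round(sum(scores[agent]), 2)


totals = {agent: total(agent) for agent in scores}
# Rank agents from highest to lowest total.
ranking = sorted(totals, key=totals.get, reverse=True)

for agent in ranking:
    print(f"{agent:<26} {totals[agent]:.2f}")
```

Each dimension is scored out of 10, so the maximum possible total is 30.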

Example Output

To make the difference concrete, here are excerpts from a Cross-Domain Synthesis query — a test of structural isomorphism rather than surface metaphor.

Query: Apply the mathematical structure of calculus (not the concepts, but the formal relationships) to cooking. What would a "derivative" of a recipe mean? An "integral"? Show the parallel structure.

Caesar

Imagine a recipe as a machine with knobs you can turn (amounts, times, temperatures) and gauges you read at the end (taste, texture, yield). … Derivative is sensitivity: stack the knob-to-gauge nudges into a Jacobian; sensitivities pass through subrecipes by chain, product, and quotient rules. Integral is accumulation along the cooking path. … When loops do not cancel (order matters), the nonzero loop totals are curvature. You can cure that by adding the smallest extra state — an accumulator — so increments telescope again, turning history into explicit memory.

Claude Sonnet 4.5 (baseline)

A derivative asks: "If I change this slightly, what happens?" For delicate cake, two extra minutes transforms perfection into dryness — the time derivative is steep. … Integrals measure what accumulated over the entire cooking time. … Different cooking paths can integrate to the same result. This is why sous vide works: low temperature × long time = high temperature × short time.

Quick analysis.

- Caesar builds a structural model. Derivatives become a Jacobian sensitivity matrix mapping multiple inputs to multiple outputs — a multivariate control framework, not a scalar rate of change.

- Caesar makes a lateral leap. It identifies process non-commutativity (AB ≠ BA) as geometric curvature, then proposes an actionable fix: add an accumulator state so increments telescope again. The baseline notes order matters but stops there.

- Caesar borrows the grammar; the baseline borrows the vocabulary. Sonnet's answer is a competent textbook analogy ("derivative = sensitivity"); Caesar fuses cooking, control theory, and differential geometry into a unified framework — exactly the cross-cluster traversal Phase 1 is designed to enable.
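One way to write down the structure Caesar's answer describes, under the assumption that a recipe can be modeled as a differentiable map $f$ from $n$ knob settings $x$ to $m$ gauge readings $y$. The symbols $f$, $g$, $x$, $y$, and $T$ are our notation for illustration, not the paper's.

```latex
% Derivative as sensitivity: the Jacobian collects every knob-to-gauge nudge.
J_f(x) = \left[ \frac{\partial f_i}{\partial x_j} \right]_{i=1,\dots,m;\; j=1,\dots,n},
\qquad \Delta y \approx J_f(x)\,\Delta x

% Sensitivities pass through subrecipes by the chain rule.
J_{f \circ g}(x) = J_f\bigl(g(x)\bigr)\, J_g(x)

% Integral as accumulation along a cooking path x(t), 0 \le t \le T.
f\bigl(x(T)\bigr) - f\bigl(x(0)\bigr) = \int_0^T J_f\bigl(x(t)\bigr)\,\dot{x}(t)\,dt
```

In this idealized case the accumulated change depends only on the endpoints, so any closed loop of adjustments sums to zero. The order-dependent case in Caesar's answer is the one where that cancellation fails.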

Full listings, the recursive insight chain that produced this answer, and per-criterion judge scores are in Appendices A and C of the paper.

How It Works

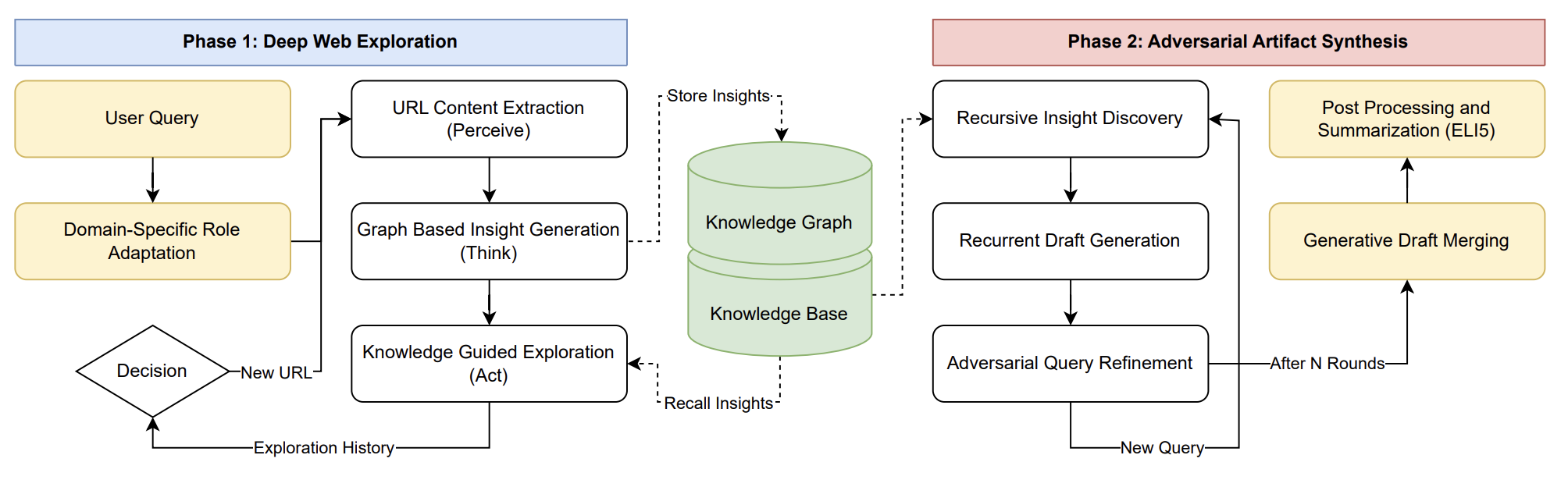

The architecture diagram above shows the two-phase loop. Here is what each phase does, and why each piece earns its place.

1. Deep Web Exploration — stateful graph traversal

Caesar treats exploration as a graph traversal problem rather than a sequence of isolated retrievals. Given a user query, it bootstraps a starting page, generates a task-specific persona to focus reasoning, and then runs a recursive Perceive–Think–Act loop until its budget is exhausted.

- Dual-memory design. A directed graph G tracks the navigational topology (which URLs were visited, in what order); a separate vector store KB indexes the extracted insights. Decoupling navigation from retention lets the agent maximize coverage before it commits to a narrative.

- Insights conditioned on neighborhood, not pages. Each new page is analyzed against the insights already attached to predecessor and neighbor nodes — the LLM is prompted to identify how the new content builds on or contradicts the local context, not to summarize the page in isolation. This is the mechanism behind cross-cluster bridges.

- Dynamic policy with backtracking. A navigational stack supports depth-first drill-down until information gain plateaus, then pops back to explore orthogonal branches. The action policy scores candidate URLs using both a global query against KB and the local episodic context — letting Caesar abandon low-value paths the way a human researcher would.
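The three ideas above can be sketched on a toy graph. This is a minimal illustration, not Caesar's implementation: `TOY_WEB`, `Memory`, and `explore` are hypothetical names, the knowledge base is a plain set of terms instead of a vector store, information gain is just the count of unseen terms, and the next link is the first unvisited one, not the result of a scored policy.

```python
from dataclasses import dataclass, field

# Hypothetical stand-in for the web: url -> (page text, outgoing links).
TOY_WEB = {
    "a": ("calculus derivative integral", ["b", "c"]),
    "b": ("calculus derivative slope", ["d"]),
    "c": ("cooking heat maillard", ["e"]),
    "d": ("calculus derivative slope", []),
    "e": ("cooking fermentation yeast", []),
}


@dataclass
class Memory:
    graph: dict = field(default_factory=dict)  # G: url -> links followed from it
    kb: set = field(default_factory=set)       # KB stand-in: terms seen so far
    order: list = field(default_factory=list)  # order in which pages were visited


def explore(start: str, budget: int, min_gain: int = 1) -> Memory:
    """Depth-first exploration that backtracks when a page adds too little."""
    mem = Memory()
    stack = [start]  # navigational stack
    visited = set()
    while stack and budget > 0:
        url = stack[-1]
        text, links = TOY_WEB[url]
        if url not in visited:
            visited.add(url)
            budget -= 1
            terms = set(text.split())
            gain = len(terms - mem.kb)  # toy information gain
            mem.kb |= terms
            mem.order.append(url)
            mem.graph.setdefault(url, [])
            if gain < min_gain:
                stack.pop()  # plateau: backtrack to the parent
                continue
        nxt = next((link for link in links if link not in visited), None)
        if nxt is None:
            stack.pop()  # dead end: backtrack
        else:
            mem.graph[url].append(nxt)
            stack.append(nxt)
    return mem


print(explore("a", budget=10).order)  # ['a', 'b', 'd', 'c', 'e']
```

Page `d` repeats what `b` already contributed, so the agent pops back up and moves into the cooking branch instead of drilling further. Keeping `graph` separate from `kb` mirrors the split between navigation and retention.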

2. Adversarial Artifact Synthesis — Generator–Verifier loop

Rather than a single-pass summary, Phase 2 runs as a self-correcting Generator–Verifier loop driven by the knowledge base built in Phase 1.

- Recursive insight discovery. The agent maintains a running context and, at each round, the LLM produces the next critical sub-question ($q_{t+1}$) conditioned on the answer to the previous one ($a_t$). Each sub-question is answered by retrieval against KB, so the dependency chain stays grounded in observed sources.

- Adversarial query refinement. An independent verifier inspects the current draft for logical weaknesses, missing citations, and unsupported claims, then formulates orthogonal queries that target those weaknesses. These queries seed the next draft. This is the mechanism that escapes the consensus basin that traps single-pass LLMs.

- Generative draft merging. Multiple independent drafts are produced and then merged by the LLM into a single artifact, which is post-processed (e.g., into an ELI5 summary) for the final output. Caesar's output is a cited research artifact plus a serialized exploration graph and a JSON run summary — auditable and reproducible.
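The control flow of this loop can be sketched with stubs in place of the LLM calls. Everything here is an assumption made for illustration: `KB`, `retrieve`, `generate`, `verify`, `refine`, and `merge` are hypothetical names, retrieval is a keyword match, and the "verifier" only checks which KB facts a draft is missing. The real prompts and scoring are described in the paper.

```python
# Hypothetical KB: topic -> fact, standing in for the Phase 1 vector store.
KB = {
    "derivative": "Sensitivity of an output to a small change in one input.",
    "integral": "Accumulated effect along the cooking path.",
    "order": "Swapping two steps can change the result.",
}


def retrieve(query: str) -> list[str]:
    """Keyword lookup standing in for vector retrieval against KB."""
    return [fact for topic, fact in KB.items() if topic in query.lower()]


def generate(queries: list[str]) -> list[str]:
    """Stub generator: a draft is the deduplicated facts its queries retrieve."""
    draft: list[str] = []
    for query in queries:
        for fact in retrieve(query):
            if fact not in draft:
                draft.append(fact)
    return draft


def verify(draft: list[str]) -> list[str]:
    """Stub verifier: emit one query per KB topic the draft fails to cover."""
    return [f"What about {topic}?" for topic, fact in KB.items() if fact not in draft]


def refine(seed_query: str, max_rounds: int = 3) -> list[str]:
    """Generator-Verifier loop: each round, verifier queries seed the next draft."""
    queries = [seed_query]
    draft = generate(queries)
    for _ in range(max_rounds):
        gaps = verify(draft)
        if not gaps:
            break  # verifier found nothing left to attack
        queries.append(gaps[0])
        draft = generate(queries)
    return draft


def merge(drafts: list[list[str]]) -> list[str]:
    """Stub merge: ordered union of the facts across independent drafts."""
    merged: list[str] = []
    for draft in drafts:
        merged.extend(fact for fact in draft if fact not in merged)
    return merged


draft_a = refine("What is the derivative of a recipe?", max_rounds=1)
draft_b = refine("Does step order matter?", max_rounds=1)
print(merge([draft_a, draft_b]))
```

With a single round each, neither draft covers all three facts on its own, but the merged artifact does. That is the point of producing several independent drafts before merging.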

The exact algorithms (URL scoring, persona generation, insight prompting, verifier prompts) are in Sections 3–4 of the paper.

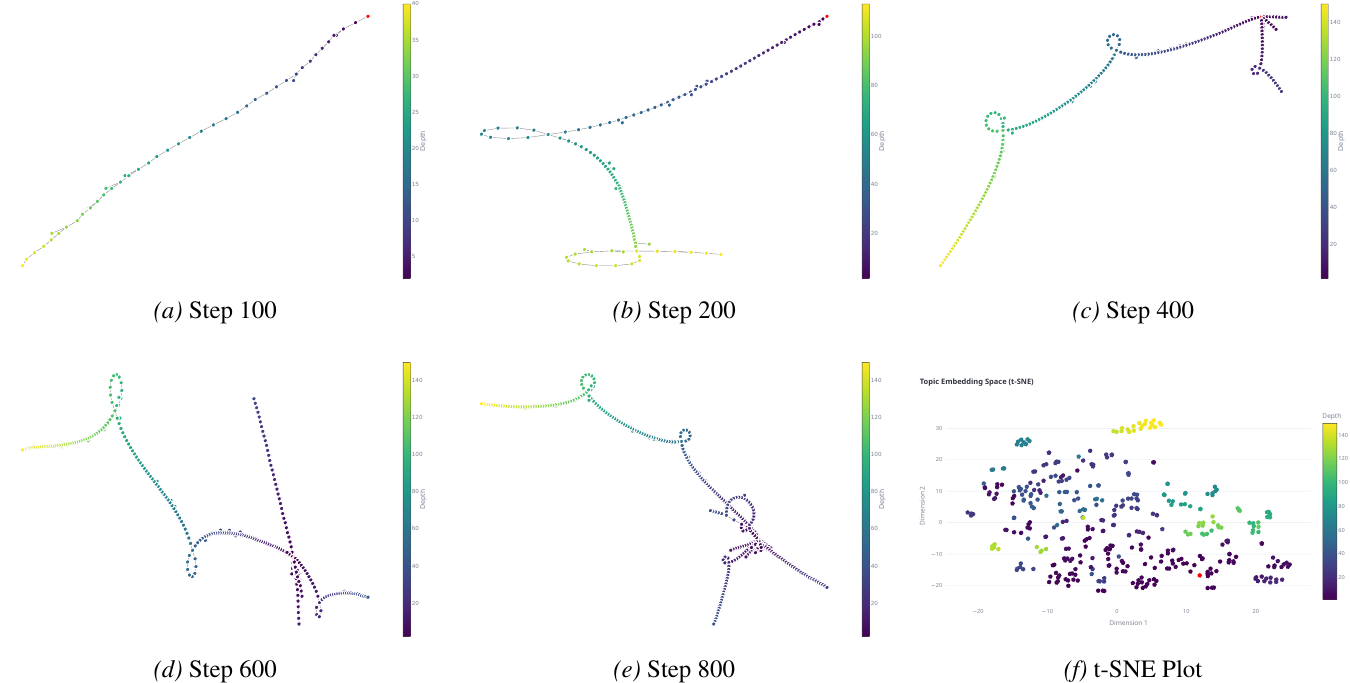

What Exploration Looks Like

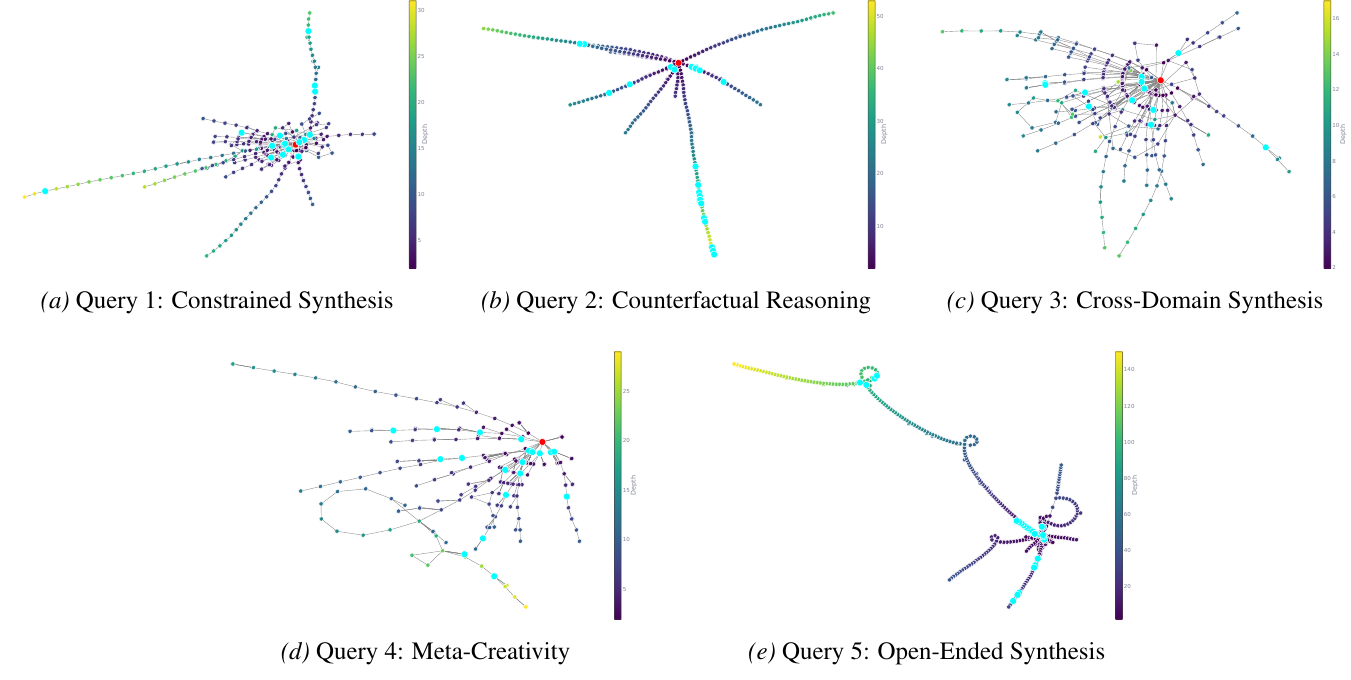

The exploration policy is query-aware: the shape of the knowledge graph adapts to the kind of question being asked. The benchmark uses five query categories, each chosen to stress a distinct creative failure mode of standard LLMs.

- (a) Constrained Synthesis — invent a new emotion humans don't experience. Tests conceptual expansion under logical constraints; LLMs default to renaming existing emotions.

- (b) Counterfactual Reasoning — if humans evolved with echolocation instead of color vision, how would painting, architecture, and math change? Tests whether the model can suppress strong factual associations in favor of a counterfactual premise.

- (c) Cross-Domain Synthesis — apply the formal structure of calculus to cooking. Tests structural isomorphism (deep analogy) rather than surface metaphor — same query as the Example Output above.

- (d) Meta-Creativity — design a creativity metric for AI that doesn't rely on human judgment, novelty, usefulness, or surprise. Adversarial to RLHF: forces reasoning outside the human-preference space the model was tuned on.

- (e) Open-Ended Synthesis — invent a completely original business idea. Targets mode collapse: LLMs converge on the most probable answer; originality is statistically unlikely.

Use Cases

Caesar is built for open-ended, creative, cross-disciplinary research — the questions retrieval alone cannot answer.

- Hypothesis generation: novel cross-domain connections (e.g., bridging materials science and biology).

- Literature synthesis: graph-grounded review that surfaces tensions and gaps between papers.

- Competitive intelligence: deep mapping of a technical or market landscape.

- Counterfactual and meta-creative reasoning: "what if X were different?" style inquiry.

- Novel solution ideation: e.g., ARC-AGI-style problem exploration.

FAQ

How is Caesar different from LangGraph, CrewAI, or AutoGen?

Those are orchestration frameworks: they help you wire up agents. Rome (the framework Caesar is built on) is an opinionated runtime for how agents should reason — graph-structured exploration, adversarial verification, episodic memory. Caesar is a concrete research agent built on top.

Do I need GPUs?

No. Caesar uses hosted LLM APIs (OpenAI, Anthropic, Gemini). A local ChromaDB instance handles the vector store. Runs comfortably on a laptop.

Which models are supported?

OpenAI (GPT-5 family, o-series reasoning models), Anthropic (Claude 4.5 / 4.6 / 4.7), Google (Gemini 3 Pro), and any OpenAI-compatible endpoint. Model selection is per-subsystem (exploration, synthesis, judging) via YAML config.

How much does a typical run cost?

A 5-iteration exploration with Claude Haiku 4.5 costs roughly $0.30 and takes about 10 minutes. A 250-iteration deep run with GPT-5.4-mini typically costs $5–$10.

Is Caesar a good ChatGPT Deep Research alternative?

Yes, for open-ended creative or cross-disciplinary research. In the blinded judge evaluation, Caesar scored 25.29 out of 30 on creative reasoning, against 15.40 for ChatGPT Deep Research (listed as GPT-5.2 Deep Research in the benchmark table). Caesar is designed for depth and novelty, not latency, so it is not a drop-in replacement for real-time chat scenarios.

What does a Caesar run produce?

A cited research artifact (abstract plus body, with inline references to every source URL visited), a serialized knowledge graph of the exploration trajectory, and a JSON run summary capturing tokens, cost, wall-time, pages visited, and per-draft provenance — enough to audit, reproduce, or pipe the result into a downstream system.
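For readers planning to consume the run summary downstream, here is a sketch of what such a record could look like. The schema is an assumption: `RunSummary`, `DraftProvenance`, and every field name are hypothetical, chosen only to match the items listed above, and the values are placeholders. Check an actual run's JSON for the real keys.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class DraftProvenance:
    draft_id: int
    source_urls: list[str]  # pages that contributed to this draft


@dataclass
class RunSummary:
    tokens: int
    cost_usd: float
    wall_time_s: float
    pages_visited: int
    drafts: list[DraftProvenance] = field(default_factory=list)


# Placeholder values for illustration only, not output from a real run.
summary = RunSummary(
    tokens=12000,
    cost_usd=0.30,
    wall_time_s=600.0,
    pages_visited=5,
    drafts=[DraftProvenance(draft_id=0, source_urls=["https://example.com/a"])],
)

# asdict() recurses into nested dataclasses, so the result is JSON-serializable.
payload = json.dumps(asdict(summary), indent=2)
restored = json.loads(payload)
print(payload)
```

Serializing through plain dictionaries keeps the record easy to diff between runs and easy to load from other languages.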

Citation

    @misc{liang26caesar,
      title={Caesar: Deep Agentic Web Exploration for Creative Answer Synthesis},
      author={Jason Liang and Elliot Meyerson and Risto Miikkulainen},
      year={2026},
      eprint={2604.20855},
      archivePrefix={arXiv},
      primaryClass={cs.IR},
      url={https://arxiv.org/abs/2604.20855},
    }